Fintech Engineering Challenges

Having worked in financial services/fintech for most of my career, I want to share some of the software engineering challenges associated with tracking and moving money digitally. Most of these lessons were learned the hard way. Hopefully they will be useful to anyone moving into the fintech space.

Note: I am intentionally only lightly touching the broad topic of "security" because that alone is a much longer article. However, many of the items below are strong controls and help with security. Good design patterns always seem to complement security as well.

Significant Digits/Rounding

This is so critical that I once wrote a whitepaper on this topic alone. Most decimal fractions cannot be represented exactly as binary floating point numbers, so they lose precision when stored that way. For example, ten cents (0.10) in binary is a repeating binary number that goes on forever like this:

0.0001100110011001100110011001100110011...

If you use floating point numbers to represent currency, computers will have to round them, and the rounding errors will add up and become noticeable, even substantial. Imagine starting with $10.00 USD and dividing it equally into three payments, rounded to two decimals - you will have three $3.33 transactions. Add them back up and you have $9.99, not $10.00. You just made a penny disappear into thin air (0.1% of the total value!).
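The drift described above is easy to reproduce. The following is a minimal sketch using only the Python standard library; the variable names are illustrative and not taken from any particular system.

```python
from decimal import Decimal

# Binary floats cannot store 0.10 exactly, so repeated addition drifts.
float_total = sum([0.10] * 10)
print(float_total == 1.0)      # False
print(0.1 + 0.2 == 0.3)        # False

# Integer cents stay exact under addition.
cents_total = sum([10] * 10)
print(cents_total == 100)      # True

# Decimal values built from strings (not from floats) are also exact.
decimal_total = sum([Decimal("0.10")] * 10)
print(decimal_total == Decimal("1.00"))  # True
```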

Currency math is more closely related to integer math than floating point math. Rounding errors on addition and subtraction are not allowed, and division/multiplication should never create more accuracy than the original values. If money needs to be divided, and division isn't even, rounding should be apportioned according to well-understood business rules. In other words, in the example above you should end up with three transactions, two for $3.33 and one for $3.34. This is best accomplished with a well-tested currency math library in your chosen language. Do not attempt to write this yourself.
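As an illustration of the apportioning rule above, here is a small sketch in integer cents. The function name `allocate` and the rule "the last shares receive the leftover cents" are assumptions made for this example; your business rules may differ, and a tested currency library is still the better choice in production.

```python
from typing import List


def allocate(total_cents: int, parts: int) -> List[int]:
    """Split total_cents into `parts` shares that always sum to the total.

    Assumed business rule: leftover cents go to the last shares, one each.
    """
    if parts <= 0:
        raise ValueError("parts must be positive")
    if total_cents < 0:
        raise ValueError("total_cents must not be negative")
    base, remainder = divmod(total_cents, parts)
    # The last `remainder` shares get one extra cent.
    return [base + (1 if i >= parts - remainder else 0) for i in range(parts)]


shares = allocate(1000, 3)
print(shares)       # [333, 333, 334]
print(sum(shares))  # 1000
```

No cent is created or lost: the shares always add back up to the original amount.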

To represent money, use 64-bit integers at a minimum. If we represent one US Dollar ($1.00) as 100 cents (in order to use whole numbers - more on this later), an unsigned 64-bit integer can represent something close to 184.5 quadrillion dollars (a signed one about half that). While it may not be an issue for many people, the upper limit of a 64-bit integer becomes restrictive when there is a need to represent values smaller than a cent. For example, in many countries, the price of a gallon/liter of gasoline requires three digits after the decimal point, and stock markets already require pricing increments of hundredths of cents, e.g., 0.0001. As you multiply to remove the decimal, the total amount you can store decreases.
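The trade-off between precision and range can be checked with a few lines of arithmetic. This sketch only uses Python's arbitrary-precision integers to compute the limits; the helper name `max_whole_units` is made up for the example.

```python
UINT64_MAX = 2**64 - 1   # largest unsigned 64-bit integer
INT64_MAX = 2**63 - 1    # largest signed 64-bit integer


def max_whole_units(max_int: int, decimals: int) -> int:
    """Largest whole-currency amount when `decimals` digits are stored after the point."""
    return max_int // 10**decimals


# Storing cents (2 decimals): roughly 184.5 quadrillion dollars unsigned.
print(max_whole_units(UINT64_MAX, 2))  # 184467440737095516
# Signed integers give about half of that.
print(max_whole_units(INT64_MAX, 2))   # 92233720368547758
# Storing 6 decimals shrinks the range to about 18.4 trillion.
print(max_whole_units(UINT64_MAX, 6))  # 18446744073709
```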

Don't ignore inflation. You will need more significant digits than you think - design it in.

For the same reason, digital currencies are another case where 64-bit integers are the bare minimum: the smallest quantity of money can be on the order of micro-cents (10^-6), or even smaller. For these reasons a commercial developer of financial software has decided to use 128-bit integers!

Notes:

- How you store monetary values is a key decision and has to align with your currency math library. For accounting applications it's very common to store values as integers (e.g., use BigInt) in the database. For example, take the amount of the transaction (let's suppose $100.23) and multiply by 100, 1000, 10000, etc. to get the precision you need. If you only need to store USD just multiply by 100 (but note that some currencies have more than two digits after the decimal). In the example, you would store the integer 10023. You'll save space in the database, and comparing two integers doesn't have the gotchas of comparing two floats. Many recommend Decimal(19,4). Some relational databases, such as Postgres, support a Money type, but then you are wedded to that specific database.

- When using JSON to pass monetary quantities (e.g., from the frontend to the backend) use integers like Stripe and Square. Otherwise, consider putting the values in strings - you never know what the serializers and deserializers across languages will do to your numbers.

- When processing currency values you need to strip currency formatting and standardize the decimal. The United Kingdom and the United States use a period to indicate the decimal place. Many other countries use a comma instead, and some currencies have three digits after the decimal instead of two. The decimal separator is also called the radix character. Likewise, while the U.K. and U.S. use a comma to separate groups of thousands, many other countries use a period, and some countries separate thousands with a thin space. You need libraries that can encode and decode human-readable values for each currency. You also need to be able to convert currency codes to currency symbols.

- This isn't specific to currency but sometimes what you think is a "number" isn't. You often need to use strings. If you need to store a bank account number like “01234567789” it will have the leading zero stripped if you use a numeric type.
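The storage and JSON notes above can be sketched with the standard library. The helper `to_minor_units` and the field names `amount` and `currency` are assumptions for this example, not the schema of Stripe, Square, or any other API.

```python
import json
from decimal import Decimal


def to_minor_units(amount: str, decimals: int = 2) -> int:
    """Convert a decimal string such as '100.23' to integer minor units, exactly."""
    scaled = Decimal(amount).scaleb(decimals)
    if scaled != scaled.to_integral_value():
        raise ValueError(f"{amount} has more than {decimals} decimal places")
    return int(scaled)


# Option 1: send integers in minor units.
payload = json.dumps({"amount": to_minor_units("100.23"), "currency": "USD"})
decoded = json.loads(payload)
print(decoded["amount"])  # 10023

# Option 2: send the amount as a string and parse it with Decimal.
from_string = Decimal(json.loads('{"amount": "100.23"}')["amount"])

# If a JSON number with a decimal point arrives anyway, avoid float parsing.
from_number = json.loads('{"amount": 100.23}', parse_float=Decimal)["amount"]
print(from_string == from_number)  # True
```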

Ledgers, Accounts and Transactions

The core concept of most financial systems is a current value which is changed via "transactions". We can model this as an "account" table and a "transaction" table. When a new account is created it has a value of zero. The account value is then updated via transactions (events) and reflects the current state. The transactions are stored for the same lifetime as the account, along with precise timestamps so we know the sequence in which they were applied.

Martin Fowler calls this pattern Event Sourcing:

The fundamental idea of Event Sourcing is that of ensuring every change to the state of an application is captured in an event object, and that these event objects are themselves stored in the sequence they were applied for the same lifetime as the application state itself.

— Martin Fowler, Event Sourcing

We should always be able to start at zero, re-apply the transactions in order, and arrive at the same current account state. However, what happens if we have millions of accounts and billions of transactions? Can we easily and quickly validate our account values? Can we quickly spot errors?
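The "start at zero and re-apply" idea can be sketched as follows. The `Transaction` shape and the use of signed amounts are simplifying assumptions for this illustration only.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Iterable


@dataclass(frozen=True)
class Transaction:
    account_id: str
    amount_cents: int      # assumption: signed, positive increases the balance
    applied_at: datetime


def replay(transactions: Iterable[Transaction], account_id: str) -> int:
    """Rebuild an account balance from zero by re-applying its transactions in order."""
    balance = 0
    for tx in sorted(transactions, key=lambda t: t.applied_at):
        if tx.account_id == account_id:
            balance += tx.amount_cents
    return balance


history = [
    Transaction("acct-1", 10_000, datetime(2023, 6, 1, tzinfo=timezone.utc)),
    Transaction("acct-1", -2_500, datetime(2023, 6, 2, tzinfo=timezone.utc)),
    Transaction("acct-2", 7_000, datetime(2023, 6, 2, tzinfo=timezone.utc)),
]
print(replay(history, "acct-1"))  # 7500
```

A stored balance that differs from the replayed value signals an error somewhere in the pipeline.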

This is where double entry accounting comes into play. Double-entry accounting originated in the 13th century in Italy to ensure the accuracy of banking and financial records. In a double-entry system, the amounts recorded as debits must always be equal to the amounts recorded as credits.

Double entry accounting also avoids the use of negative numbers. In theory, all balances and transactions are represented by positive, non-zero figures. If you design your system for only positive numbers you reduce or eliminate some sources of error.

“Use a double-entry ledger” is probably the most valuable advice I could have sent myself prior to getting into fintech because it allows you to reconcile all your data all the time.

In fact, the term "balance" comes from double entry accounting - credits must always balance with debits. Thus, every transaction is balanced – it sums to zero. For example, if we sell a $100 widget but haven't been paid yet, we are owed $100, and we have $100 in revenue:

| Ledger Account | Debit | Credit |
|---|---|---|
| receivables | $100.00 | $0.00 |
| revenue | $0.00 | $100.00 |
| Balance | $100.00 | $100.00 |

To understand this further let's introduce some terms:

- Ledger: A ledger contains accounts and journal (bookkeeping) entries. Every business has one ledger for itself internally. You can also have external ledgers. A bank account is an example of an external ledger.

- Account: Ledger accounts categorize the money flowing through the ledger. It is modelled like a tree, with the topmost levels pointing to the balance sheet or profit-loss statement. The second level points to items on the reports. The lower levels are customizable. Examples of common ledger accounts are revenue, accounts receivable, and accounts payable.

- Journal entry: this is a single record in the ledger, comparable to a row in a table. It reflects the actual money movement between ledger accounts. This is what we would consider a transaction, or Martin Fowler would call an "event".

- Credits and Debits: A debit is an entry that is created to indicate either an increase in assets or a decrease in liabilities on a business's balance sheet. Credits, on the other hand, work in the opposite way - they are mirrors of one another.

If we are correctly handling transactions via a ledger, we can make sure we are always in balance. This is a critical control.
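The balance control can be enforced at posting time. This is a minimal sketch using positive integer cents only; the names `Line`, `is_balanced`, and `post` are hypothetical and the journal is just an in-memory list.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass(frozen=True)
class Line:
    account: str
    debit_cents: int = 0
    credit_cents: int = 0


def is_balanced(lines: Sequence[Line]) -> bool:
    """A journal entry balances when total debits equal total credits."""
    return sum(l.debit_cents for l in lines) == sum(l.credit_cents for l in lines)


def post(journal: List[Tuple[Line, ...]], lines: Sequence[Line]) -> None:
    """Append an entry to the journal, rejecting anything that does not balance."""
    for line in lines:
        if line.debit_cents < 0 or line.credit_cents < 0:
            raise ValueError("amounts must not be negative")
        if (line.debit_cents > 0) == (line.credit_cents > 0):
            raise ValueError("each line must be either a debit or a credit")
    if not is_balanced(lines):
        raise ValueError("debits and credits do not balance")
    journal.append(tuple(lines))


journal: List[Tuple[Line, ...]] = []
# The $100 widget sale from the table above.
post(journal, [Line("receivables", debit_cents=10_000),
               Line("revenue", credit_cents=10_000)])
print(len(journal))  # 1
```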

Reporting Periods

When reporting financial results, we have the concept of a period "close". For example, in an accounting system there are monthly, quarterly, and annual closes. The goal is that financial reporting should be immutable and idempotent: Close your periods and generate reports for them, and if/when you regenerate the report it must be identical.

Once a period is closed new transactions cannot affect it - it should be effectively immutable after being closed, except we must handle the edge case of re-opening periods.

If an "as of" (late) transaction arrives for a date/time in a closed period, the period may be re-opened and closed again after the transaction is posted. This can be quite complex as it also requires subsequent transactions to be reversed and re-applied, plus it requires restating the period results, and requires new financial reports to be issued. This is typically done for material transactions only.

For non-material transactions, the "as-of" transaction is posted to the first moment of the current open period instead. In this example the transaction date/time and posting date/time will be different. The transaction date could be September 23rd but posted on October 1st (the first day of the open period.) This also requires any subsequent transactions to be reversed and re-applied.

NOTE: some organizations operate on a cash basis vs. an accrual basis. Cash basis is simpler – you either do or don’t have the money. No unwinding required. Unfortunately, I've never seen cash basis accounting in any fintech.

Reconciliation

Reconciliation: A game designed to frustrate the player.

Reconciliation is a business process which arises from "leaks" in the pipelines that convey money between businesses or people (typically because of data quality issues, or network connectivity challenges). Because of this, humans spend a great deal of time every month reconciling incoming payments to invoices, or balances to bank accounts, or other money movement.

We can make this better. Money movement today is, after all, just a message between parties. Humans should not be involved in performing reconciliation. They should only be involved in investigating reconciliation issues/errors. My advice is:

- Design for guaranteed messaging where/if possible, or idempotency.

- Ensure high-quality structured transactional data.

- Reconciliation is complicated by the states that financial transactions may be in - use state machines to manage valid states and state transitions.

- Design and build automated reconciliation into your system from the start. Or there are many reconciliation engines available - you don't have to reinvent the wheel.

This lowers the total cost of payments by eliminating human effort, which is expensive, and human errors, which are also expensive (and numerous). An underappreciated consequence is that freeing people from drudgery gives them the ability to do more important, meaningful work.
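To make the idea of automated reconciliation concrete, here is a deliberately small sketch that matches ledger entries to bank statement lines by a shared reference ID. The data shapes (dictionaries of reference to amount in cents) and all names are assumptions for this example; real reconciliation engines handle fuzzy matching, partial payments, and timing differences.

```python
from typing import Dict, List, NamedTuple


class ReconciliationResult(NamedTuple):
    matched: List[str]
    amount_mismatch: List[str]
    missing_from_bank: List[str]
    missing_from_ledger: List[str]


def reconcile(ledger: Dict[str, int], bank: Dict[str, int]) -> ReconciliationResult:
    """Compare two {reference: amount_cents} mappings and classify every reference."""
    matched: List[str] = []
    mismatch: List[str] = []
    for ref in sorted(ledger.keys() & bank.keys()):
        (matched if ledger[ref] == bank[ref] else mismatch).append(ref)
    return ReconciliationResult(
        matched,
        mismatch,
        sorted(ledger.keys() - bank.keys()),
        sorted(bank.keys() - ledger.keys()),
    )


result = reconcile(
    ledger={"inv-1": 10_000, "inv-2": 2_500, "inv-3": 900},
    bank={"inv-1": 10_000, "inv-2": 2_400, "inv-9": 50},
)
print(result.amount_mismatch)  # ['inv-2']
```

Only the three non-matched buckets need human attention, which is the division of labor argued for above.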

Transaction States

Financial transactions have many different states. For example, credit card payment states can be authorized, cleared, voided, returned, declined, etc. It can be tempting to just go ahead and update the state on the transaction record. Don't do that! Instead, each state change should have its own immutable record, tracking transaction state changes over time.

You will need transaction state auditability. Auditors looking at a financial system need to trust that every entry in the ledger was made at a specific time and has not been manipulated in any way. To an architect, the requirement is better phrased as “use an append-only log / event architecture” than as “update transactions”. Also, it is very beneficial to design and build your transaction state libraries around a "state machine" that enforces the business rules of state changes.
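A state machine combined with an append-only history might look like the sketch below. The transition table is a hypothetical simplification invented for this example and does not reflect the full rules of any card network.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List, Optional, Tuple

# Hypothetical transition table; real card-payment rules are more involved.
ALLOWED_TRANSITIONS = {
    None: {"authorized", "declined"},
    "authorized": {"cleared", "voided"},
    "cleared": {"returned"},
    "declined": set(),
    "voided": set(),
    "returned": set(),
}


@dataclass(frozen=True)
class StateChange:
    transaction_id: str
    state: str
    recorded_at: datetime


class TransactionStateLog:
    """Append-only record of state changes; nothing is ever updated in place."""

    def __init__(self, transaction_id: str) -> None:
        self.transaction_id = transaction_id
        self._history: List[StateChange] = []

    @property
    def current_state(self) -> Optional[str]:
        return self._history[-1].state if self._history else None

    def transition(self, new_state: str) -> StateChange:
        if new_state not in ALLOWED_TRANSITIONS.get(self.current_state, set()):
            raise ValueError(f"illegal transition {self.current_state!r} -> {new_state!r}")
        change = StateChange(self.transaction_id, new_state, datetime.now(timezone.utc))
        self._history.append(change)
        return change

    def history(self) -> Tuple[StateChange, ...]:
        return tuple(self._history)


log = TransactionStateLog("tx-1")
log.transition("authorized")
log.transition("cleared")
print(log.current_state)  # cleared
```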

Business rules are a pit of alligators, but some types of logical validation should be built into your transaction posting logic. If you sell widgets that range from $10.00 to $1,000 USD, should you expect to see a sales transaction for $10 million? Or one for a penny? At the very least these transactions should be flagged for review.

You should build data quality rules on top of your data storage. Data quality rules typically fall into one of six dimensions: accuracy, completeness, consistency, timeliness, validity, and uniqueness. Validity rules such as "can't be null", "can't be less than zero", and "must be in this range" can highlight suspect data quickly.
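Validity rules like the ones named above are cheap to encode. This sketch uses the widget price range from the earlier example ($10.00 to $1,000, held as cents); the function name and the flag messages are made up for illustration.

```python
from typing import List, Optional


def review_flags(amount_cents: Optional[int],
                 min_cents: int = 1_000,
                 max_cents: int = 100_000) -> List[str]:
    """Return the reasons a sale should be flagged for review (empty list if none)."""
    if amount_cents is None:
        return ["amount is missing"]
    flags: List[str] = []
    if amount_cents <= 0:
        flags.append("amount must be positive")
    if not (min_cents <= amount_cents <= max_cents):
        flags.append("amount outside expected price range")
    return flags


print(review_flags(50_000))         # []
print(review_flags(1))              # ['amount outside expected price range']
print(review_flags(1_000_000_000))  # ['amount outside expected price range']
```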

Immutability

I have mentioned immutable data like transactions and transaction state changes several times now. There are other key benefits to having data be immutable - as an example, ransomware cannot corrupt or encrypt immutable data. Also, immutable data can be optimized for "write once, read many" access so it can be more performant.

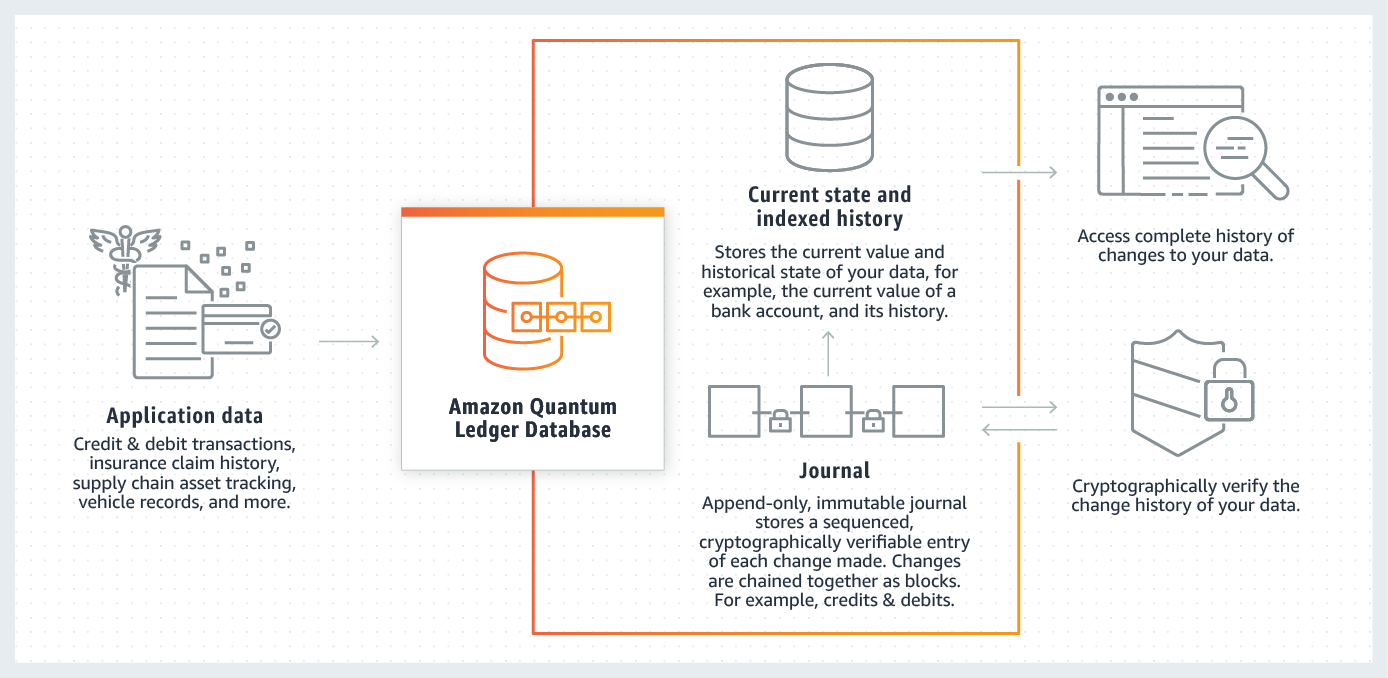

There are some great building blocks to use today such as AWS's Quantum Ledger Database. This enables you to track and maintain a sequenced history of every data change using an immutable and transparent journal with built-in cryptographic verification.

It works like this:

This also helps with auditability, information security, and controls. Auditors, CISOs and regulators love immutable data.
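The general idea behind a cryptographically verifiable journal can be shown with a simple hash chain. To be clear, this is a conceptual sketch and not the API or internal design of AWS's Quantum Ledger Database; the function names and entry layout are assumptions.

```python
import hashlib
import json
from typing import Dict, List

GENESIS_HASH = "0" * 64


def _digest(prev_hash: str, payload: dict) -> str:
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(journal: List[Dict], payload: dict) -> None:
    """Append an entry whose hash covers both its payload and the previous hash."""
    prev_hash = journal[-1]["hash"] if journal else GENESIS_HASH
    journal.append({"prev": prev_hash, "payload": payload,
                    "hash": _digest(prev_hash, payload)})


def verify(journal: List[Dict]) -> bool:
    """Recompute every hash; any edit to an earlier entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in journal:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


chain: List[Dict] = []
append_entry(chain, {"tx": "tx-1", "amount_cents": 10_000, "currency": "USD"})
append_entry(chain, {"tx": "tx-2", "amount_cents": 2_500, "currency": "USD"})
print(verify(chain))  # True

chain[0]["payload"]["amount_cents"] = 1  # simulate tampering
print(verify(chain))  # False
```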

NOTE: "Forget me" laws (such as Europe's GDPR, or California's CCPA) generally do not apply to financial transaction records that you are legally required to retain. Otherwise, we would be creating money laundering havens. Other records retention laws come into play for banking, insurance and securities - you must keep what you need to keep, and you should be careful not to keep anything past the necessary retention period. How you store data must conceptually align with the applicable records retention policy. You need to design for this up front.

Currency Codes

Never record an amount without its associated currency code. Use ISO standard codes (ISO 4217). For example, the Swiss franc is represented by CHF – the CH being the code for Switzerland in the ISO 3166 code and F for franc.

Don't be tempted to use currency symbols - they are not unique. The Australian dollar, Mexican Peso, Singapore Dollar, and US Dollar all use "$". Trust me - use ISO standard currency codes.

All amounts/values need a corresponding currency code, everywhere. In addition, transactions will also need accurate timestamps for many reasons (e.g., to find the most accurate exchange rate).
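One way to make "never an amount without a currency code" hard to violate is to bind the two in a single value type. The `Money` class below is a hypothetical sketch, not a replacement for a tested currency library; it only checks that the code looks like a three-letter code, not that it is a currently assigned one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Money:
    amount_minor: int   # integer minor units, e.g. cents
    currency: str       # three-letter currency code such as "USD" or "CHF"

    def __post_init__(self) -> None:
        code = self.currency
        if len(code) != 3 or not code.isalpha() or not code.isupper():
            raise ValueError(f"not a three-letter uppercase currency code: {code!r}")

    def __add__(self, other: "Money") -> "Money":
        if not isinstance(other, Money):
            return NotImplemented
        if other.currency != self.currency:
            raise ValueError(f"cannot add {self.currency} and {other.currency}")
        return Money(self.amount_minor + other.amount_minor, self.currency)


total = Money(100, "USD") + Money(250, "USD")
print(total)  # Money(amount_minor=350, currency='USD')
```

Mixing currencies now fails loudly instead of silently producing a meaningless number.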

Time/Timestamps

Never record a transaction without an accurate timestamp. Timestamps and time synchronization are incredibly important in fintech applications for several reasons:

- Transaction Ordering: Financial transactions need to be processed in the order they were initiated. This is especially crucial in high-frequency trading where trades are often made in milliseconds or microseconds. A small difference in timing could potentially lead to substantial financial gains or losses. Therefore, accurate time synchronization ensures order in the execution of these transactions.

- Security: Accurate timekeeping helps in maintaining security. For instance, time-based one-time passwords (TOTPs) are widely used in two-factor authentication systems. These passwords are valid only for a short period of time and rely on synchronized clocks on the server and client side.

- Audit Trails and Dispute Resolution: Timestamping transactions can help create a precise audit trail, which is critical for detecting and investigating fraudulent activities. In case of any dispute, a detailed and accurate transaction history backed by synchronized time can help resolve the issue.

- Distributed Processing: fintech systems must scale, and in order to scale and maintain reliability they are architected as distributed systems. In distributed systems, time synchronization is important to ensure data consistency. Many financial systems are distributed over different geographical locations, and transactions need to be coordinated between these systems in an orderly fashion. This requires all servers to have their clocks synchronized.

Think of "currency" as three separate components: (currency code, decimal amount, and timestamp).

Other considerations:

- Use TIMESTAMPTZ. Always. The timestamptz datatype is a time zone-aware date and time data type. Furthermore, even for “date fields” consider using a timestamptz. Every date implicitly exists in a timezone, and if you ignore that you'll get bitten later.

- When you're using JSON to pass around datetime data, use ISO 8601 date and time with offset info, always. E.g., "transaction_timestamp": "2023-06-28T15:55:22.511Z".

- Use UTC everywhere, even when you find you can't use it everywhere.

- Another important one to think about is bitemporality. “Created at” vs “effective at”. Not obvious at first, and you'll need to build it in. Fowler has a good overview here.
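The UTC and ISO 8601 advice above can be sketched with the standard library. The helper names `to_utc_iso` and `parse_iso` are assumptions for this example; both refuse naive datetimes so that a missing time zone is caught early.

```python
from datetime import datetime, timedelta, timezone


def to_utc_iso(dt: datetime) -> str:
    """Serialize an aware datetime as ISO 8601 in UTC with millisecond precision."""
    if dt.tzinfo is None:
        raise ValueError("naive datetime: time zone is required")
    text = dt.astimezone(timezone.utc).isoformat(timespec="milliseconds")
    return text.replace("+00:00", "Z")


def parse_iso(text: str) -> datetime:
    """Parse an ISO 8601 timestamp that carries an offset (or a trailing 'Z')."""
    dt = datetime.fromisoformat(text.replace("Z", "+00:00"))
    if dt.tzinfo is None:
        raise ValueError("timestamp has no offset information")
    return dt


# 11:55 local time at UTC-4 is 15:55 in UTC.
local = datetime(2023, 6, 28, 11, 55, 22, 511000, tzinfo=timezone(timedelta(hours=-4)))
print(to_utc_iso(local))  # 2023-06-28T15:55:22.511Z
print(parse_iso("2023-06-28T15:55:22.511Z") == local)  # True
```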

Retry Logic

There are many places in fintech where we want "exactly once" transaction processing. Consider API calls: what if we were designing an API endpoint to charge a customer money; accidentally calling it twice would lead to the customer being double charged.

This is where idempotency keys come into play. When performing an API request, or a transaction, the client generates a unique ID to identify just that operation and sends it up to the server along with the normal payload. The server receives the ID and correlates it with the state of the request on its end. If the client notices a failure, it retries the request with the same ID, and from there it’s up to the server to figure out what to do with it.

- On retrying a connection failure, on the second request the server will see the ID for the first time and process it normally.

- On a failure midway through an operation, the exact behavior is heavily dependent on implementation, but if the previous operation was successfully rolled back by way of an ACID database, it’ll be safe to retry it.

- On a response failure (i.e., the operation executed successfully, but the client couldn’t get the result), the server simply replies with a cached result of the successful operation.

In other words, “be careful with retries” is more strictly stated as “use idempotent operations”. Stripe's article on idempotency is the canonical reference.
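The server side of the idempotency-key flow described above can be sketched as follows. `ChargeService` is a hypothetical in-memory stand-in: a real implementation would keep keys and results in a durable store and make the check-and-record step atomic.

```python
import uuid
from typing import Dict, Tuple


class ChargeService:
    """In-memory illustration of idempotency keys (not production-ready)."""

    def __init__(self) -> None:
        self._seen: Dict[str, Tuple[tuple, dict]] = {}
        self.charges_executed = 0

    def charge(self, idempotency_key: str, customer_id: str,
               amount_cents: int, currency: str) -> dict:
        request = (customer_id, amount_cents, currency)
        if idempotency_key in self._seen:
            original_request, cached_result = self._seen[idempotency_key]
            if original_request != request:
                raise ValueError("idempotency key reused with a different request")
            return cached_result  # replay the stored result, charge nothing
        self.charges_executed += 1
        result = {"charge_id": f"ch_{self.charges_executed}", "status": "succeeded",
                  "amount_cents": amount_cents, "currency": currency}
        self._seen[idempotency_key] = (request, result)
        return result


service = ChargeService()
key = str(uuid.uuid4())  # generated once by the client, reused on every retry
first = service.charge(key, "cust-1", 10_000, "USD")
retry = service.charge(key, "cust-1", 10_000, "USD")
print(first == retry, service.charges_executed)  # True 1
```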

Architecture

A well-designed architecture not only ensures optimal performance and scalability but also addresses critical concerns of security, compliance, and user experience. This article provides some clear, high-level advice.

- Study existing architecture/code. Review anything you can get your hands on. For example, a great open source banking core having many of the strengths listed is Apache Fineract. Also check out the links in the References section below.

- Model your business processes. One of the best ways to keep complexity in check is to model processes as state machines (with the state itself being persisted to DB). State machines can be formally tested. By modelling your systems, you will learn what the important failure modes are and you will get better at designing systems that are resilient and efficient.

- Use formal methods. Harnessing the capabilities of formal software architecture design methodologies offers a structured approach to conceptualizing, designing, and implementing complex financial systems, ensuring robustness, reliability, and adherence to industry standards. Complex fintech projects involve multidisciplinary teams. Formal architectures provide a shared language and understanding, enhancing collaboration among developers, architects, domain experts, and business stakeholders. Incorporating formal architecture or design methods into fintech endeavors is an investment in long-term success.

- Use proven design patterns. Queues, retries, event sourcing, payment state handling -- we live in a concurrent world with network failures and our system will need to gracefully handle outages. Vendors will have errors and outages too. Use design patterns that help with robustness and reliability.

  Card payment systems are basically unreliable peer-to-peer messaging systems. Be prepared for a lot of complexity. Using an event-sourcing architecture is useful here for that "auditing" requirement and for debugging transaction state when the network sends you messages in error, out of order, or they forget to retry themselves when they promised to, when merchants send bad data, when POS systems do weird things, etc. Your partners will send you bad data - be prepared to validate all input data thoroughly and have strategies in place for when it's wrong.

- Use well designed, tested, and trusted componentry. The libraries and SDKs you will need to use will become a large, critical part of your overall technology stack and some of these decisions will be very difficult to unwind if they don't work out. Knowing how to wrap and use third party libraries for things like DOCv step up, remote deposit capture, and security features is table stakes. If your stack choices make this challenging then you're going to have a hard time for a long time.

- Automate Everything. Use "infrastructure as code" practices to define and deploy infrastructure in a repeatable, auditable manner. On the software side fully embrace continuous integration (CI) and continuous delivery (CD). CI/CD bridges the gaps between development and operation activities and teams by enforcing automation in building, testing and deployment of applications. Leverage DevOps best practices to manage environments, automate deploys and create consistency in dev, testing and product releases.

- Formalize your automated testing strategy and code coverage expectations. It's nearly impossible to be successful with lightweight, informal testing strategies employed by busy software teams. Yes, you will be tempted to "move fast" and use prototypes, etc. But if you don't build in robust automated testing from the start along with methods of managing test data you will have a hard time. You need automated regression testing as a minimum.

Other considerations:

- Maker-checker is a powerful concept. Embrace it to the fullest across your system.

- You will be dealing with all sorts of non-standardized financial integrations. A lot. Think adapter pattern as early as possible.

- You will likely be answering to multiple regulatory agencies. Create boundaries between them within your system and reduce the surface of compliance as much as possible.

- Regulatory agencies will inform (define?) your records retention policies - know these before you design your data storage layer.

- Be paranoid about race conditions / serialization anomalies.

- Use immutable storage where possible (this also protects from ransomware).
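As one concrete reading of the maker-checker item above, the sketch below requires a second, different person to approve a payment before it can be released. All names are hypothetical, and real systems would also record timestamps, roles, and an audit trail.

```python
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class PendingPayment:
    payment_id: str
    amount_cents: int
    currency: str
    maker: str                       # who created the payment
    approved_by: Optional[str] = None


def approve(payment: PendingPayment, checker: str) -> PendingPayment:
    """Return an approved copy; the maker can never approve their own payment."""
    if payment.approved_by is not None:
        raise ValueError("payment is already approved")
    if checker == payment.maker:
        raise PermissionError("maker and checker must be different people")
    return replace(payment, approved_by=checker)


def can_release(payment: PendingPayment) -> bool:
    return payment.approved_by is not None


draft = PendingPayment("pay-1", 250_000, "USD", maker="alice")
approved = approve(draft, checker="bob")
print(can_release(draft), can_release(approved))  # False True
```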

Identity Management

Financial systems attract fraud. One of the best ways to combat fraud is having robust ways to determine that someone is who they claim to be, and that they are a human. Identity management is the bedrock upon which the entire financial ecosystem is built, ensuring the overall robustness of the ecosystem. Identity theft, account takeovers, and fraudulent transactions are just a few examples of the threats that financial institutions face. Identity management acts as a bulwark against these threats.

By establishing a reliable and secure means of confirming identities, financial institutions can confidently interact with each other, customers can trust the services they receive, and regulators can ensure compliance with relevant laws and regulations.

Start with OAuth 2.0 - the industry-standard protocol for authorization. Understand the threat model and attack scenarios you will experience. Use multi-factor authentication and in the future, adopt passkeys.

Fintech firms also have "know your customer" regulations, and using OAuth 2.0 is not sufficient to guarantee you are dealing with a specific human. This is where a partner like Socure can help - this takes lots of design and build time to get right. My advice is not to do this yourself.

Testing and Release Management

Deciding how to configure environments — dev, test, prod, etc. — and how to ensure they are running properly can be rough in any organization. In fintech, when real money moves on prod, and core functionality depends on numerous third-party integrations, it is very challenging. This article is not the place to explain how to do it, but there are three common mistakes to avoid.

- Many companies have a confusing or poorly documented path from test to production for third party integrators. Make this path clear, and while you're at it, do a risk analysis on whether it could ever be possible for a third party to connect to the wrong environment without catching their own mistake.

- Plan for every important configuration of your production environment to be testable. Testability might require multiple test merchants and test accounts that move real money. You never know when you need to double check that the plumbing is working. Face it: You Test in Prod.

- An often-overlooked requirement is to be able to ensure that the production environment is doing error handling properly. The thing about production systems is that they're not supposed to have errors, so you can't see how they perform under error conditions unless you can force errors to happen. If you have more courage than I do, use something like Netflix's Chaos Monkey to force validation of failover.

Application Monitoring

Customers: not the best early warning system.

Your application will need a full suite of application metrics that need to be defined up front and engineered into the application. Deploying to a public cloud like AWS means that some infrastructure monitoring can be added quickly and almost as an afterthought, but application monitoring cannot. Engineers should know when systems fail before anyone else, especially customers.

On day one of your launch, the CEO will want to know things like how many transactions are being processed and what their total dollar amount is. Another thing that tends to happen early in the life of a fintech is that there will be a drop-off between signups and usage, and someone on the product team will start asking if users are experiencing errors. Be ready with good application monitoring to be able to answer these kinds of questions.

What metrics signal fraud attempts? From day one you will have to be vigilant about fraud patterns in your application. Make sure you are monitoring user activity for the inevitable questions that will be coming your way.

References

- Double-entry accounting for software engineers

- Storing Money and Float Precision

- Event Sourcing

- Decimal and Thousands Separators

- Books, an immutable double-entry accounting database service

- Double-entry Bookkeeping for Programmers

- An Engineer's Guide to Double-Entry Bookkeeping

- Things I Wish I Knew Before Building a Ledger

- Twisp - The core accounting engine to power any financial product

- An Elegant DB Schema for Double-Entry Accounting

- Uber Ledger on DynamoDB and S3

- Reconciliation: A game designed to frustrate the player

- 64-Bit Bank Balances ‘Ought to be Enough for Anybody’?

- The Color of Money

- Event Sourcing, CQRS and Micro Services: Real FinTech Example from my Consulting Career